I did the Cluster Challenge again this year. Last year was fun, but this year was better – we won! Here’s the story:

We started Saturday morning in Bloomington. The trip went pretty smoothly, and Guido picked us up at the airport in Austin. We went directly to the conference location. The time before the trip was very stressful because the machine wasn’t working quite as nicely as we would have liked. The biggest problem was that we could not change the CPU frequency of the new iDataplex system. However, we were able to change it on the older version and saw significant gains. We benchmarked that, within our power constraints (2×13 A), we could run 16 nodes during the challenge and use 12 of them for HPCC (while 4 were idle). So we convinced IBM to give us a pre-release BIOS update which was supposed to enable CPU frequency scaling. And it looked good! We were able to reduce the CPU clock to 2.0 GHz (as on the older systems). However, it was 4am and we had to ship at 6, so we didn’t have the time to test more. But back to Austin …

The guys from Dresden were already waiting for us because the organizers did not allow them to unpack the cluster alone (it was supposed to be a team effort). We unpacked our huge box and rolled our 900-pound cluster into our booth.

Our Cluster

We spent the rest of the day installing the system and pimping (;-)) our booth. It went pretty well. Then we began to deploy our hardware and boot it from USB to do some performance benchmarks.

Installing the fragile Fiber Myrinet equipment (we didn’t break anything!)

We started with HPCC and were shocked twice. Number one was that the CPU scaling that had cost us so many sleepless nights did not seem to help. All tools and /proc/cpuinfo showed 2.0 GHz – but the power consumption was still as high as with 2.5 GHz. So we wrote a small RDTSC benchmark to check the CPU frequency – it still ran at 2.5 GHz. The BIOS was lying to us :-(. The second shock was that HPL was twice as slow as it should be. So much for sleep …
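For the curious, a check along these lines can be sketched in C. This is not the benchmark we wrote that night, just a minimal sketch of the idea: read the time stamp counter with RDTSC, busy-wait for a known interval, read it again, and divide. The names `now_seconds` and `estimate_tsc_hz` are made up for illustration. One caveat: on processors where the TSC ticks at a constant rate regardless of the core clock, this measures the TSC rate rather than the current core frequency, so treat the result as a cross-check against /proc/cpuinfo and the power meter, not as proof.

```c
#include <stdint.h>
#include <time.h>

#if defined(__x86_64__) || defined(__i386__)
#include <x86intrin.h>   /* __rdtsc() on GCC and Clang */
#define HAVE_RDTSC 1
#else
#define HAVE_RDTSC 0
#endif

/* Wall-clock time in seconds (C11 timespec_get; a monotonic clock
 * would be preferable in a real benchmark). */
static double now_seconds(void) {
    struct timespec ts;
    timespec_get(&ts, TIME_UTC);
    return (double)ts.tv_sec + (double)ts.tv_nsec * 1e-9;
}

/* Hypothetical helper: estimate TSC ticks per second by counting
 * ticks across a busy-wait of roughly `interval` seconds.
 * Returns 0.0 on platforms without RDTSC. */
double estimate_tsc_hz(double interval) {
#if HAVE_RDTSC
    double t0 = now_seconds();
    uint64_t c0 = __rdtsc();
    double t1;
    do {
        t1 = now_seconds();          /* spin so the core stays busy */
    } while (t1 - t0 < interval);
    uint64_t c1 = __rdtsc();
    return (double)(c1 - c0) / (t1 - t0);
#else
    (void)interval;
    return 0.0;
#endif
}
```

Calling `estimate_tsc_hz(0.2)` and dividing the result by 1e9 gives a figure in GHz that can be compared with what the tools report.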

Quite some time after midnight … still hacking on stuff. I’m trying to motivate (I am a good slave driver) our guys to go on.

The students tried to fix it … all night long. The conclusion was that we had to drop our cool boot-from-USB idea due to the performance loss. Later, it turned out that shared memory communication used /tmp (which was mounted via NFS) and was thus really slow (WTF!). Anyway, about one hour before the challenge started, we decided to fall back to disks. This worked.
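In hindsight, a quick check of what kind of filesystem /tmp lives on would have saved us a night. Here is a minimal sketch of such a check on Linux, using `statfs()` and comparing the filesystem type against the NFS magic number. The helper name `path_is_on_nfs` is made up for illustration, and the sketch assumes Linux; elsewhere it simply reports that it cannot tell.

```c
#ifdef __linux__
#include <sys/vfs.h>   /* statfs(), struct statfs */
#endif

/* Same value as NFS_SUPER_MAGIC in <linux/magic.h>. */
#define NFS_MAGIC 0x6969L

/* Hypothetical helper: returns 1 if `path` lives on NFS, 0 if it
 * does not, and -1 if the check failed or is unsupported here. */
int path_is_on_nfs(const char *path) {
#ifdef __linux__
    struct statfs sb;
    if (statfs(path, &sb) != 0)
        return -1;                   /* e.g. path does not exist */
    return ((long)sb.f_type == NFS_MAGIC) ? 1 : 0;
#else
    (void)path;
    return -1;
#endif
}
```

Running `path_is_on_nfs("/tmp")` on each node before the first benchmark would flag the setup that bit us.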

How high can one stack hard drives? Not too high actually ;). Man, it was hard to plug them back into the system.

The second problem was a tough one. The BIOS … lying to us. We were finally able to get hold of an engineer from IBM. He tried hard but couldn’t help us either. So the students had to make the hard decision to run with two fewer nodes :-(.

In the meantime, Bob and I had fun biking to power our laptops ;).

Bob Beck (UA) generating power on our fancy machine ;).

I was powering my laptop with the sandwiches I had eaten earlier :).

The Challenge finally starts

The challenge was about to start and the advisors couldn’t do anything anymore, so we decided to get some fuel from the opening banquet for our students on the night shift ;).

Guido and I, thinking about getting some good stuff for the students!

We finally found some good stuff on the show floor *yay*. Advisors’ success!

Some of us were not totally up to speed all the time 😉 – It looks like somebody missed the start:

So the Challenge ran, and we had nothing to do (especially the advisors, who were just hanging around to feed and motivate the students). So we did all kinds of weird things overnight – and we had a bike ;).

I also started some coding during the challenge because I had nothing else to do and it was way too noisy to work on papers. I had to pose inside the Microsoft booth while my laptop finished up some cool things! Thanks to Erez for taking the picture at exactly the right time.

Some Linux-based “research” performed/finished inside the Microsoft booth.

Guido explains Vampir to the other teams on one of our three ultra-cool 41” displays (again, around midnight ;)). We had really nice speakers at the challenge. Especially on Sunday, when all the others had left, we cranked them up and listened to the soundtrack of Black Hawk Down. The security guys seemed kind of confused to hear really loud bass at 4am ;).

Guido! Don’t help the “enemies” ;).

YouTube also made it onto our display :). It nearly cost us a point by disturbing the sound output of our power warning system. Fortunately, Jens noticed in time.

Watch for yourself: Achmed the Dead Terrorist

Oh, and there was this Novell penguin that spontaneously caught fire. I guess this happens when experienced computer scientists spend two days installing a completely broken operating system (with InfiniBand – ask me about details if you’re interested). I love Linux, but it’s a shame that the abbreviation SLES has the word “Linux” in it. Debian or Ubuntu is so much better! But apparently, SLES is better prepared for the applications (clearly not for administration or software maintenance though).

Each booth was “armed” with at least one student at all times. Here are some images from after midnight.

The MIT booth – doesn’t it look more like Stonybrook?

The folks from Arizona State – they had a neat Cray – with Windows though. But it seems that it worked for them.

The guys from Colorado with Aspen systems (don’t ask them about their vendor partner).

The National Tsing Hua University – excellent people but their system was more of a jet engine than a cluster.

Our booth … note the image on the big screen ;).

The Alberta folks – last year’s champions. Darn good hackers!

Purdue with their SiCortex – they seemed rather annoyed all the time.

Our social corner: At 2am, most students didn’t have much to do (just watching jobs). So they all gathered in front of our booth and played cards :).

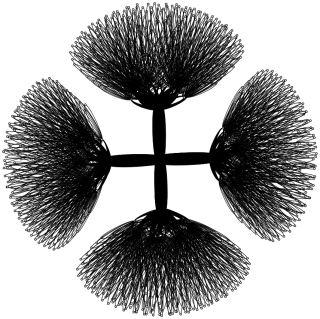

Two fluffy spectators were watching our oscilloscope animation during the show-off on Thursday.

The team of judges, led by Jack Dongarra, talked to our students to assess their abilities.

After that, we won! We don’t have a picture of our fabulous win yet, but I’ll post it with some more links once I get it.
