
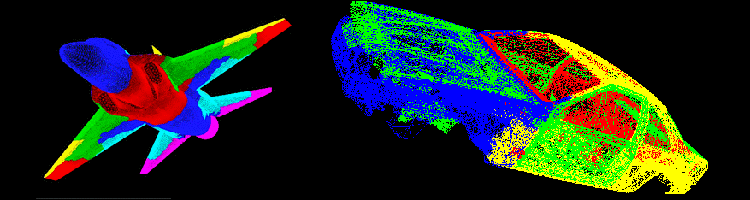

Create MPI-2.2 Graph Topologies from ParMETIS partitions!

Description

MPIParMETIS is a prototype library that helps developers of parallel applications use the scalable graph topology interface of MPI-2.2. The library offers several calls that accept the output of the ParMETIS library and create (potentially reordered) MPI-2.2 graph topologies in a scalable way.

Download the MPIParMETIS Library

These versions of the library are available:

Building the Library

Testing the Library

The subdirectory parmetis_test contains the ptest program that is supplied with the ParMETIS distribution. It has been modified to create a graph topology for each of the tests it runs. To test the implementation, compile ptest with "make" and run it with different numbers of processes, using "rotor.graph" as the input file.

Using the Library

Call ParMETIS as described in the ParMETIS manual. Then pass the input graph specification to MPIX_Graph_create_parmetis_unweighted(). Currently, the library supports only unweighted k-way partitioning (ParMETIS_V3_PartKway() and ParMETIS_V3_PartGeomKway()). The call MPIX_Graph_create_parmetis_unweighted() accepts the parameters:
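As an illustration of the process graph that such a topology describes, here is a minimal serial sketch in C. It assumes the whole input graph is held by one process in the CSR arrays xadj/adjncy that ParMETIS also uses (in ParMETIS itself the graph is distributed and adjncy holds global vertex numbers), a partition vector part with k parts, and that rank r takes partition r. The function name quotient_graph is hypothetical; it is not part of MPIParMETIS or ParMETIS and does not describe how the library is implemented. Two partitions become neighbors when at least one edge of the input graph connects them.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical helper (not part of MPIParMETIS or ParMETIS).
 * Input: an undirected graph in CSR form (xadj has n+1 entries, adjncy
 * holds neighbor indices) and a partition part[0..n-1] with values in
 * [0, k). Output: the quotient ("process") graph in CSR form, where
 * partitions p != q are adjacent if any edge connects p and q.
 * qxadj must have room for k+1 entries; the returned qadj array is
 * malloc'ed (caller frees) and lists each partition's neighbors in
 * ascending order. */
int *quotient_graph(int n, const int *xadj, const int *adjncy,
                    const int *part, int k, int *qxadj)
{
    /* Dense k x k adjacency matrix: simple, fine for small k. */
    unsigned char *adj = calloc((size_t)k * k, 1);
    assert(adj != NULL);

    for (int u = 0; u < n; u++) {
        assert(part[u] >= 0 && part[u] < k);
        for (int e = xadj[u]; e < xadj[u + 1]; e++) {
            int p = part[u];
            int q = part[adjncy[e]];
            if (p != q) {
                /* Mark both directions so the result is symmetric. */
                adj[(size_t)p * k + q] = 1;
                adj[(size_t)q * k + p] = 1;
            }
        }
    }

    /* Count the neighbors of each partition to build CSR offsets. */
    qxadj[0] = 0;
    for (int p = 0; p < k; p++) {
        int deg = 0;
        for (int q = 0; q < k; q++)
            deg += adj[(size_t)p * k + q];
        qxadj[p + 1] = qxadj[p] + deg;
    }

    /* Fill the neighbor lists; scanning q upward keeps them sorted. */
    int *qadj = malloc((size_t)(qxadj[k] > 0 ? qxadj[k] : 1) * sizeof *qadj);
    assert(qadj != NULL);
    int pos = 0;
    for (int p = 0; p < k; p++)
        for (int q = 0; q < k; q++)
            if (adj[(size_t)p * k + q])
                qadj[pos++] = q;

    free(adj);
    return qadj;
}
```

For example, for a path of six vertices split into three parts as {0, 0, 1, 1, 2, 2}, partition 1 gets the neighbors 0 and 2, while partitions 0 and 2 each get only partition 1. In an MPI-2.2 program, rank r could pass the entries qadj[qxadj[r]] through qadj[qxadj[r+1]-1] as both sources and destinations to MPI_Dist_graph_create_adjacent() with MPI_UNWEIGHTED and reorder set to 1, which allows the MPI library to renumber the processes. The dense k-by-k matrix keeps the sketch short; for large k, sorting and deduplicating the crossing edges per partition would scale better.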

MPI-2.2-Capable MPI Implementations

MPI-2.2 is a fairly recent standard, and only a few MPI implementations support it so far. All tests of the software were performed with MPICH2 (revision r5551).
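Because the library relies on the scalable graph topology interface introduced in MPI-2.2 (MPI_Dist_graph_create() and MPI_Dist_graph_create_adjacent()), an application may want to check which standard version its MPI library reports before using it. A minimal sketch follows; the helper name supports_mpi_2_2() is hypothetical, and the function is kept free of MPI headers so it compiles on its own.

```c
#include <stdbool.h>

/* Hypothetical helper, not part of MPIParMETIS.
 * version/subversion are the numbers an MPI library reports, e.g. via
 * MPI_Get_version() or the MPI_VERSION and MPI_SUBVERSION macros in mpi.h.
 * Returns true if the library claims MPI-2.2 or a later standard. */
bool supports_mpi_2_2(int version, int subversion)
{
    /* Any major version above 2 (MPI-3.x and later) qualifies;
     * within MPI-2, the subversion must be at least 2. */
    return version > 2 || (version == 2 && subversion >= 2);
}
```

In an application, one would call MPI_Get_version(&version, &subversion), which the MPI standard permits even before MPI_Init(), and pass both values to the helper. The reported numbers only state which standard the library claims to implement, so testing against a concrete implementation remains necessary.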

© Torsten Hoefler